Disease Screening & Base Rate Fallacy

Definition

The base rate fallacy is the tendency to neglect the prior probability (the base rate) of a hypothesis when judging how likely that hypothesis is given some specific evidence, that is, when estimating its conditional probability. (Based on Wikipedia)
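In symbols, the correct calculation is Bayes' theorem. Writing $H$ for the hypothesis (for example, "has cancer") and $E$ for the evidence (for example, "tested positive"), the base rate $P(H)$ appears explicitly. The notation here is ours, added for illustration:

```latex
P(H \mid E) = \frac{P(E \mid H)\,P(H)}{P(E \mid H)\,P(H) + P(E \mid \neg H)\,P(\neg H)}
```

Neglecting the base rate amounts to ignoring $P(H)$, for example by judging $P(H \mid E)$ from $P(E \mid H)$ alone.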

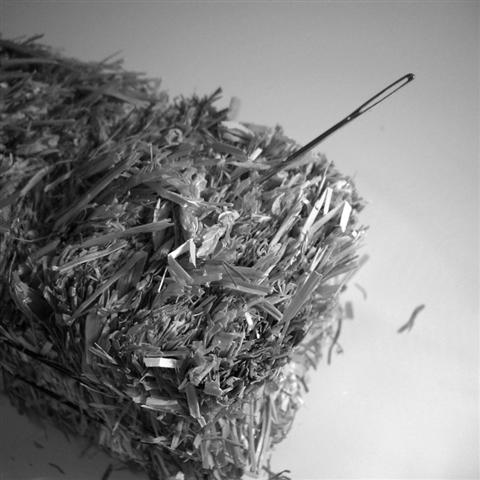

(Photo: Amy at Flickr)

Commentary

A recent example is the controversy over breast cancer screening.

Imagine that about 1% of women (1 in 100) have breast cancer. You have a diagnostic test that correctly detects cancer 85% of the time (i.e. if the test is given to 100 women with cancer, it catches 85, but misses 15 of them).

The test also raises false alarms about 10% of the time (i.e. if the test is given to 100 women without cancer, it mistakenly tells 10 of them they have cancer, but correctly tells the other 90 they don't have cancer).

Now the tricky bit: imagine we give the test to 1,000 women in the population. If the test says a woman has cancer, what is the probability she actually has cancer?

This question is hard for many people (including doctors!) because it's hard to weigh, in our heads, how accurate the test is against how rare the disease is to begin with. Here's how to do the calculation correctly:

- Based on the rate of cancer in the population (1%), how many of the 1,000 women tested do we expect to have cancer?

  Answer: About 10.

- Of those 10 who have cancer, about 9 will be told they have cancer, and 1 will be missed. (Recall, the test only catches 85% of cancers; 85% of 10 is 8.5, which we round to 9.)

- Of the remaining 990 women who don't have cancer, about 99 will be told they have cancer (the 10% false-alarm rate), while the rest (891) will be correctly told they don't have cancer.

- So how many women are told they have cancer?

  Answer: 9 + 99 = 108.

- How many of those women actually have cancer?

  Answer: Just the 9.

- So if you're told you have cancer, what's the chance you actually have cancer?

  Answer: 9 / 108 = 8.3%
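The counting steps above can be sketched in Python using expected counts ("natural frequencies"). This is a minimal illustration, not a clinical tool: the names `ScreeningCounts` and `expected_counts` are hypothetical, and the sketch keeps fractional expected counts instead of rounding them to whole women.

```python
from dataclasses import dataclass


@dataclass
class ScreeningCounts:
    """Expected counts when a test is given to a whole population (hypothetical helper)."""
    true_positives: float   # have cancer, test says cancer
    false_negatives: float  # have cancer, test misses it
    false_positives: float  # no cancer, test says cancer
    true_negatives: float   # no cancer, test says no cancer


def expected_counts(population: int, prevalence: float,
                    sensitivity: float, false_positive_rate: float) -> ScreeningCounts:
    sick = population * prevalence   # women expected to have cancer
    healthy = population - sick      # women expected not to have cancer
    return ScreeningCounts(
        true_positives=sick * sensitivity,
        false_negatives=sick * (1 - sensitivity),
        false_positives=healthy * false_positive_rate,
        true_negatives=healthy * (1 - false_positive_rate),
    )


counts = expected_counts(1000, prevalence=0.01, sensitivity=0.85, false_positive_rate=0.10)
told_positive = counts.true_positives + counts.false_positives
ppv = counts.true_positives / told_positive  # P(cancer | told cancer)
print(f"told they have cancer: {told_positive:.1f}")
print(f"P(cancer | positive) = {ppv:.1%}")
```

Because it doesn't round 8.5 true positives up to 9, this sketch reports 107.5 women told they have cancer and a probability of about 7.9%, slightly below the rounded 8.3%; the conclusion is the same.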

So a positive result means only about an 8% chance of actually having cancer. Pretty strange, right? What about the women who are told they don't have cancer? What's the probability that one of them actually does have cancer?

- How many women are told they don't have cancer?

  Answer: 1 + 891 = 892.

- How many of those actually have cancer?

  Answer: Just the 1.

- So if you're told you don't have cancer, what's the chance that you actually do have cancer?

  Answer: 1 / 892 = 0.1%

That means that it's pretty unlikely for you to have cancer if the test says you don't.
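The same two answers follow from Bayes' theorem applied to the rates directly, without choosing a population of 1,000. A minimal sketch in Python; the function names are hypothetical. Since nothing is rounded, it gives about 7.91% for a positive result and about 0.17% for a negative one, close to the rounded 8.3% and 0.1%.

```python
def p_cancer_given_positive(prevalence: float, sensitivity: float,
                            false_positive_rate: float) -> float:
    """Bayes' theorem: P(cancer | positive) = P(positive | cancer) P(cancer) / P(positive)."""
    true_pos = sensitivity * prevalence                 # P(positive and cancer)
    false_pos = false_positive_rate * (1 - prevalence)  # P(positive and no cancer)
    return true_pos / (true_pos + false_pos)


def p_cancer_given_negative(prevalence: float, sensitivity: float,
                            false_positive_rate: float) -> float:
    """Bayes' theorem: P(cancer | negative) = P(negative | cancer) P(cancer) / P(negative)."""
    false_neg = (1 - sensitivity) * prevalence               # P(negative and cancer): missed cancers
    true_neg = (1 - false_positive_rate) * (1 - prevalence)  # P(negative and no cancer)
    return false_neg / (false_neg + true_neg)


rates = dict(prevalence=0.01, sensitivity=0.85, false_positive_rate=0.10)
print(f"P(cancer | told cancer)    = {p_cancer_given_positive(**rates):.2%}")
print(f"P(cancer | told no cancer) = {p_cancer_given_negative(**rates):.2%}")
```

The population size cancels out of both ratios, which is why the "imagine 1,000 women" framing and the probability formula agree.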

A positive result is so unreliable because breast cancer isn't that common to begin with (about 10 in 1,000 women). The test's false alarms apply to the 990 women without cancer, so even a 10% false-alarm rate produces far more false positives (99) than true positives (9) when the test is applied to the entire population.

Naturally, this has policy implications: if you test more and more people from the general population, most of those told they have cancer won't actually have it, which leads to more invasive testing with real side effects of its own. The trick is either to test a high-risk subpopulation (where the prevalence rate is higher) or to improve the test by reducing its false-positive rate.
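Both remedies can be explored with a small Python sketch. The function name `positive_predictive_value` is hypothetical, and the prevalences and false-positive rates in the loops are illustrative values chosen for this example, not real screening data.

```python
def positive_predictive_value(prevalence: float, sensitivity: float,
                              false_positive_rate: float) -> float:
    """P(disease | positive test), via Bayes' theorem."""
    true_pos = sensitivity * prevalence                 # P(positive and disease)
    false_pos = false_positive_rate * (1 - prevalence)  # P(positive and no disease)
    return true_pos / (true_pos + false_pos)


# Remedy 1: screen higher-risk groups (illustrative prevalences, not real data).
for prevalence in (0.01, 0.05, 0.20):
    ppv = positive_predictive_value(prevalence, sensitivity=0.85, false_positive_rate=0.10)
    print(f"prevalence {prevalence:>4.0%}: P(cancer | positive) = {ppv:.1%}")

# Remedy 2: keep the 1% population but use a test with fewer false alarms.
for fpr in (0.10, 0.05, 0.01):
    ppv = positive_predictive_value(0.01, sensitivity=0.85, false_positive_rate=fpr)
    print(f"false-positive rate {fpr:>4.0%}: P(cancer | positive) = {ppv:.1%}")
```

With these illustrative numbers, raising prevalence from 1% to 20% lifts the chance that a positive result is real from about 8% to 68%, while cutting the false-positive rate from 10% to 1% lifts it to about 46%.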

See Also

- Screening for disease and dishonesty at Understanding Uncertainty, for an excellent interactive representation of base rates.
- Screening for Breast Cancer at the U.S. Preventive Services Task Force, for a summary of its recommendations.
- The base rate fallacy reconsidered, for a counter-argument about base rate neglect.